NVIDIA Run:ai

NVIDIA Run:ai is a GPU orchestration platform that schedules and manages AI and machine learning workloads across Kubernetes clusters. vCluster is a certified Kubernetes distribution for hosting NVIDIA Run:ai. It lets platform teams share GPU infrastructure across isolated tenants without provisioning a separate physical cluster for each team.

Compatibility has been verified with the following versions.

| Component | Version |
|---|---|
| Kubernetes | v1.34 |
| vCluster | v0.31 |
| NVIDIA Run:ai | v2.24 |
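
As a rough illustration of how the table above might be used in a pre-flight check, the sketch below compares a set of installed versions against the verified combination. The helper names (`parse_major_minor`, `unverified_components`) and the shape of the `installed` dictionary are assumptions made for this example and are not part of vCluster or NVIDIA Run:ai. A component flagged here is not necessarily incompatible; it is only outside the combination listed as verified.

```python
# Verified versions copied from the compatibility table (major.minor only).
VERIFIED = {
    "Kubernetes": (1, 34),
    "vCluster": (0, 31),
    "NVIDIA Run:ai": (2, 24),
}


def parse_major_minor(version: str) -> tuple[int, int]:
    """Turn 'v1.34.2' or '1.34' into (1, 34); patch components are ignored."""
    parts = version.strip().lstrip("vV").split(".")
    return int(parts[0]), int(parts[1])


def unverified_components(installed: dict[str, str]) -> list[str]:
    """Return components that are missing or whose major.minor differs
    from the verified combination (hypothetical helper for illustration)."""
    return [
        name
        for name, expected in VERIFIED.items()
        if name not in installed
        or parse_major_minor(installed[name]) != expected
    ]


if __name__ == "__main__":
    # Example input; in practice these strings would come from your own tooling.
    installed = {
        "Kubernetes": "v1.34.2",
        "vCluster": "v0.31.0",
        "NVIDIA Run:ai": "v2.24",
    }
    print(unverified_components(installed))
```

Comparing only major and minor numbers matches the granularity of the table, which lists no patch versions.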

Deployment models

vCluster supports all three deployment models with NVIDIA Run:ai:

| Model | Description | Use case |
|---|---|---|
| Shared nodes | Tenants share Control Plane Cluster nodes with label-based scheduling. Each tenant gets a separate tenant cluster with its own Kubernetes API. | Trusted tenants, cost-efficient GPU sharing |
| Private nodes | Each tenant gets a dedicated tenant cluster with auto-provisioned private nodes. | Untrusted tenants, strict compliance requirements |
| Standalone | A single-tenant deployment where NVIDIA Run:ai manages the full node pool within one tenant cluster. | Single team, dedicated GPU pool |

Installation

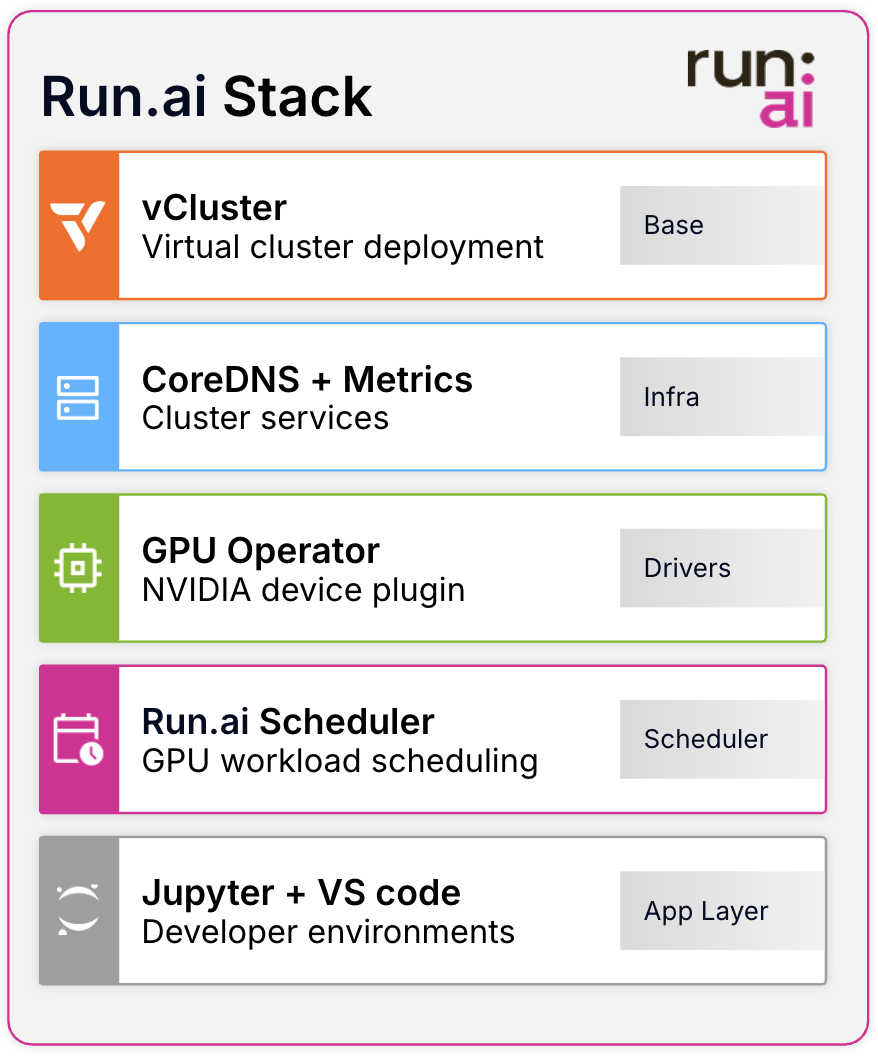

The NVIDIA Run:ai certified stack provisions a vCluster together with the NVIDIA GPU Operator and NVIDIA Run:ai in a single, tested deployment. See the certified-stacks repository for prerequisites, configuration options, and step-by-step setup instructions.